The recent debacle with Google’s LaMDA AI still rings through the industry. After Google engineer Blake Lemoine claimed that the company’s AI is sentient, researchers have set out to discover why humans are so willing to find life in artificial products.

Researchers from the Italian Institute of Technology looked into the phenomenon of humans finding life in the lifeless. So why are we, as a species, so easily fooled into believing machines are sentient?

Why do humans find sentience in robots?

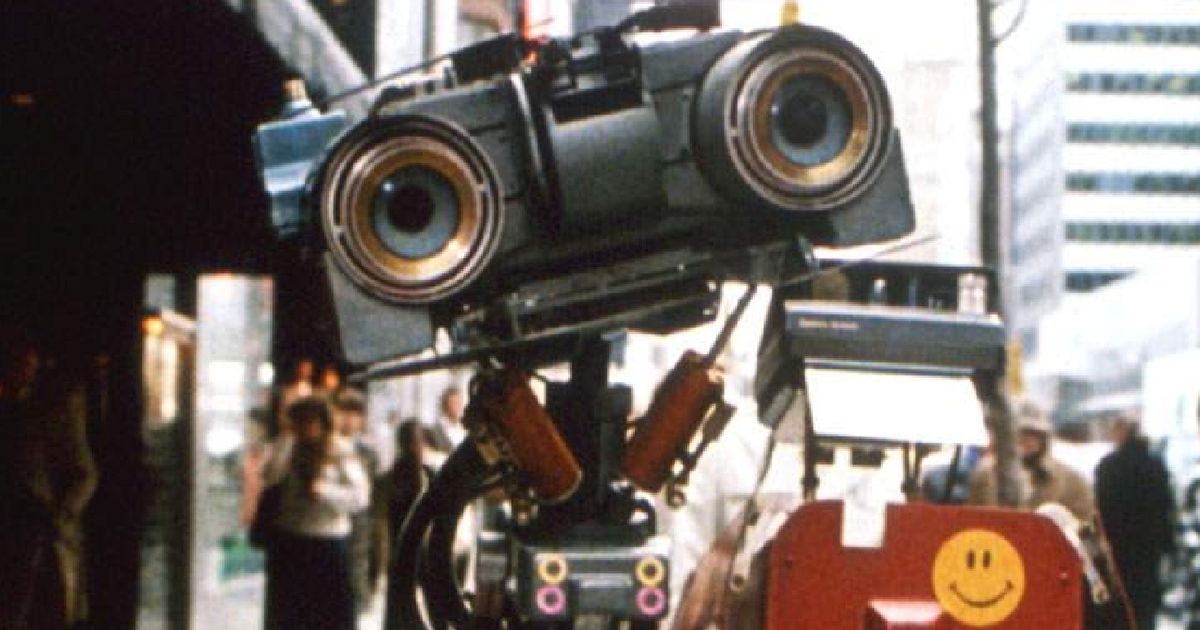

In the new study, scientists tasked participants with talking to an anthropomorphic robot called iCub. Designed to be as personable as possible, the humanoid robot was able to convince participants that it was alive.

Each participant completed a questionnaire before and after talking to the robot. Before each conversation, the scientists set iCub to act either traditionally robotic or more friendly, like robots in media.

Those who interacted with the more robotic personality believed that everything iCub did was programmed. On the other hand, test subjects who interacted with the friendlier, more personable version believed that the robot was alive.

In the study, test subjects believed that the robot was able to have independent thoughts and desires. This means that many are easily led to believe that robots are not defined by their programming, as long as they appear to be lifelike.

“The relationship between anthropomorphic shape, human-like behaviour and the tendency to attribute independent thought and intentional behaviour to robots is yet to be understood,” said researcher Dr Agnieszka Wykowska. “As artificial intelligence increasingly becomes a part of our lives, it is important to understand how interacting with a robot that displays human-like behaviours might induce higher likelihood of attribution of intentional agency to the robot.”

Could this be dangerous?

Of course, the main issue with believing a robot is sentient is that it almost certainly is not. At least for now, robotics and artificial intelligence remain rudimentary, far from the independent thinking of fictional counterparts like Halo’s Cortana.

These studies may end up being used to create more personable robots in the future. Robots that are designed to be social, such as robot waiters, could use this data to become more lifelike and less creepy to patrons. Wykowska believes that social bonding with robots could be beneficial in some settings.

“Social bonding with robots might be beneficial in some contexts, like with socially assistive robots,” Wykowska said. “For example, in elderly care, social bonding with robots might induce a higher degree of compliance with respect to following recommendations regarding taking medication.”